A lot of modern software is built on a little bargain: ship the feature, let reality find the bugs, and call the bruises feedback. For small startups building low-consequence products, that bargain is often not just normal but rational. Humans are expensive. Time is short. The product is still trying to prove it deserves to exist. Some duct tape belongs in that phase.

The Cursor Key That Entered the Body Count

Therac-25 is still the story that should sober up a room. Between June 1985 and January 1987, six known accidents involved massive overdoses from the Therac-25 radiation therapy machine. This was not folklore. Nancy Leveson and Clark Turner documented it in painful detail from public records and agency material.

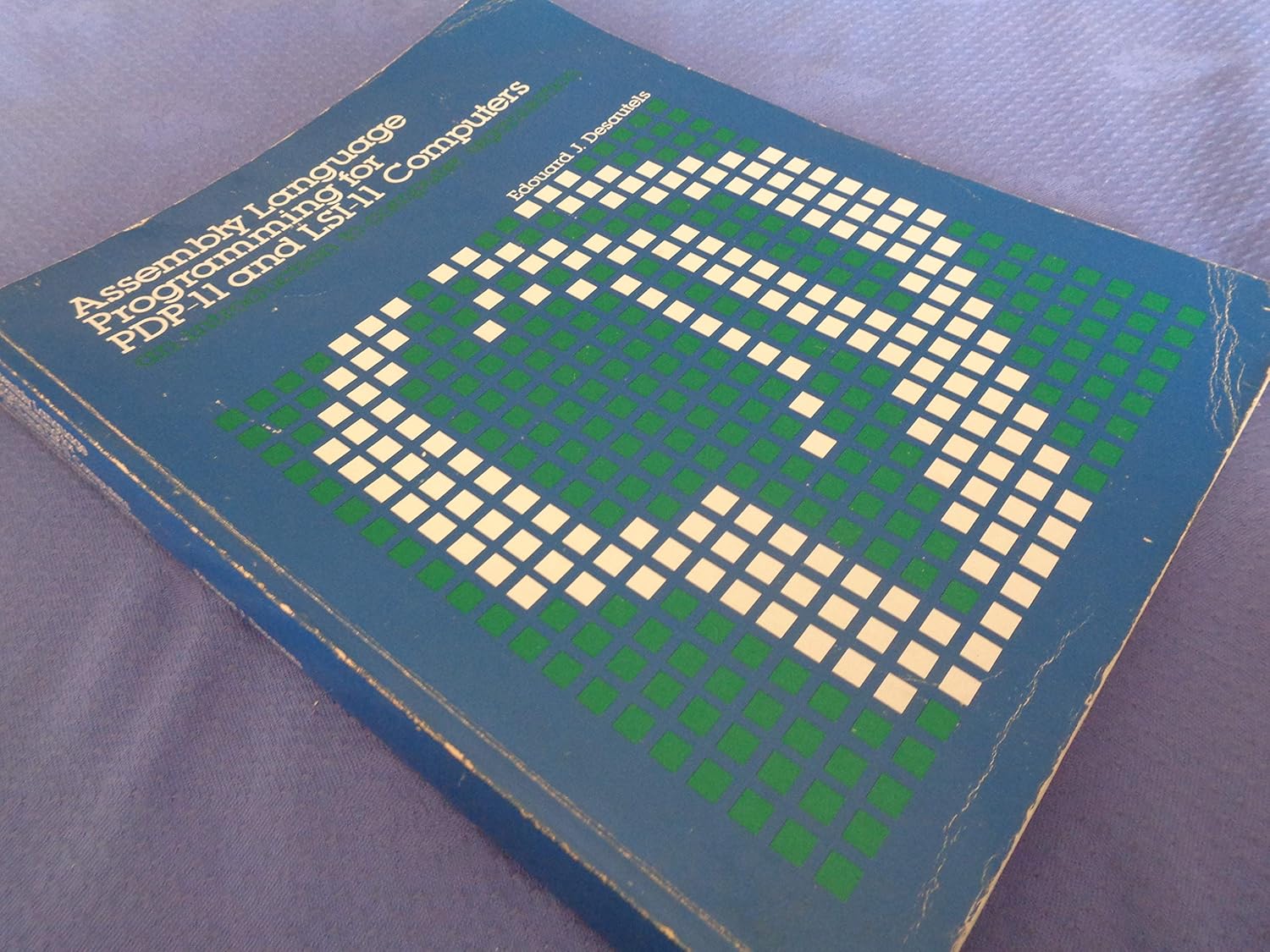

The software was developed by a single person over several years in PDP-11 assembly language. It ran a custom real-time executive, reused older code, shared memory between tasks, and left synchronization flimsy enough that the investigators wrote the sentence in plain English: "a potential race condition is set up."

One fatal path depended on the operator entering treatment data, moving to the command line, pressing the cursor-up key to edit the mode, and returning fast enough. Leveson and Turner say the Tyler error occurred when the operator did that all within 8 seconds. That was enough for the machine state to drift out of reality. In response, AECL literally told hospitals to disable the key: remove the key cap, tape over the switch, and re-enter the whole prescription.

This is the part that should make every engineer a little sick. The bug was not a gothic mystery. It was not quantum noise. It was not an impossibly rare cosmic edge case. It was a race condition, shared variables without real synchronization, and a user-interface shortcut in a machine that fired ionizing radiation into human bodies. People received doses so extreme that some injuries were incompatible with life.
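To make the shape of that failure concrete, here is a deliberately tiny sketch. It is not a reconstruction of the Therac-25 code: `Console`, `unsafe_setup`, and `safe_setup` are hypothetical names, and the operator's keystrokes are replayed from a plain list so the outcome is deterministic rather than timing-dependent. It only illustrates the pattern: one task copies a shared value, spends time on slow setup, and never notices that the value changed underneath it.

```python
from dataclasses import dataclass


@dataclass
class Console:
    """Hypothetical operator screen holding the prescription as typed."""
    mode: str = "xray"
    edits: int = 0  # bumped on every edit; only the safe path looks at it

    def edit_mode(self, new_mode: str) -> None:
        self.mode = new_mode
        self.edits += 1


def unsafe_setup(console, operator_actions):
    """Latch the mode once, run the slow setup, never re-check the screen."""
    latched = console.mode
    for action in operator_actions:  # operator keeps typing during setup
        action(console)
    return latched  # the mode the hardware was actually configured for


def safe_setup(console, operator_actions):
    """Repeat setup until no edit arrived while it was running."""
    pending = list(operator_actions)
    while True:
        latched, seen = console.mode, console.edits
        for action in pending:  # edits that land during this setup pass
            action(console)
        pending = []  # those edits have now been applied
        if console.edits == seen:
            return latched  # screen and hardware agree
```

With a single edit arriving during setup, `unsafe_setup` returns `"xray"` while the screen reads `"electron"`. `safe_setup` sees that the edit counter moved and repeats the setup until the two agree. A real system would also want a hardware interlock behind this, since the check itself is still software.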

Some Software Ships Features. Some Software Ships Consequences.

Startups know this rhythm by heart. Ship fast. Patch later. Put the wobbling chair under the tablecloth and keep the demo moving. That can be perfectly fine when the failure mode is an ugly dashboard or a confused user clicking refresh twice.

But success has a nasty habit of collecting the bill later. The same shortcuts that helped the company survive month three start biting in year three. The codebase swells, the guarantees get ceremonial, and one day the team realizes it built a living organism out of deadlines and polite denial. Then the old bargain stops feeling clever.

And in high-consequence systems, the bargain collapses immediately. It holds only as long as the worst a bug can do is duplicate a checkout email or break a settings page. Once a bug can poison a dosage, blind a pilot, scramble guidance, or quietly turn a safety system into theater, reliability stops being an aspiration and becomes the price of admission.

MISRA Started When Automotive Software Stopped Pretending To Be A Hobby

The automotive world arrived at the same cold conclusion from a different direction. MISRA began in the early 1990s inside the UK government's SafeIT programme. Its 1994 vehicle software guidelines were, by MISRA's own history, the first automotive industry approach to functional safety, years before ISO 26262 arrived to formalize the neighborhood.

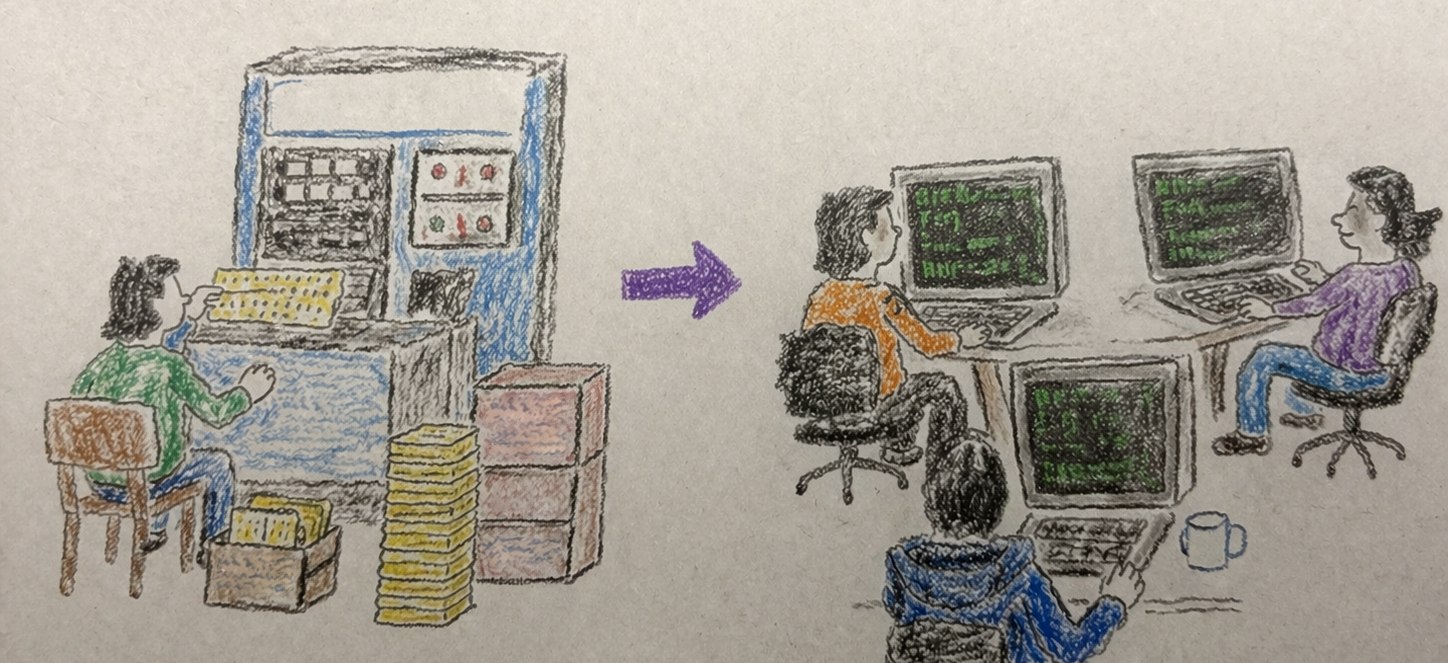

Then came the part people still misunderstand. MISRA was not born because engineers suddenly discovered style. MISRA C was born because the industry was moving from assembly to C and needed a restricted subset of a standardized programming language. Ford and Rover had separate C subset efforts. They merged them. The industry admitted the obvious: raw C gave too many ways to be clever in a context where cleverness kept trying to become a funeral expense.

That is the real moral. Reliability culture does not ask whether a language is elegant. It asks whether the language leaves too many loaded guns lying on the kitchen table. If yes, you fence the language. You ban pieces. You demand subsets. You trade freedom for odds of survival.
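What "fencing the language" looks like mechanically can be sketched in a few lines. The example below is a Python analogue, not MISRA C and not any real MISRA rule: the banned constructs (`global`, bare `except`, calls to `eval` and `exec`) and the function name `subset_violations` are illustrative assumptions. The idea is simply that a subset is a list of things the tool refuses to let through.

```python
import ast

# Illustrative choices only; these are not taken from any MISRA guideline.
BANNED_CALLS = {"eval", "exec"}


def subset_violations(source: str) -> list[str]:
    """Return one finding per banned construct found in the source text."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Global):
            findings.append(f"line {node.lineno}: 'global' statement is banned")
        elif isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append(f"line {node.lineno}: bare 'except' is banned")
        elif (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in BANNED_CALLS
        ):
            findings.append(f"line {node.lineno}: call to {node.func.id}() is banned")
    return findings
```

Run against a function that uses `global`, `eval`, and a bare `except`, the checker reports three findings; run against plain arithmetic, it reports none. Real subset checkers are far more thorough, but the trade is the same: less freedom, fewer loaded guns.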

Ada And SPARK Were Built To Make The Compiler More Suspicious Than The Team

Ada came out of a defense world drowning in dialects and trying to standardize real-time embedded work that actually had to survive deployment. AdaCore's own history is blunt about the mission: Ada was designed for reliable, safe, secure, high-integrity systems. Strong typing, contracts, explicit concurrency, runtime checks, profiles that narrow behavior, all of it comes from the same instinct. Make the language less permissive before the mission becomes less survivable.

SPARK pushes the knife deeper. It is a statically verifiable subset of Ada created for the most critical applications. The sales pitch is not charm. The sales pitch is proof. Prove absence of runtime errors. Prove memory safety properties. Prove contracts over all inputs instead of hoping your tests happened to wander across the right minefield.
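The difference between testing a contract and establishing it for all inputs can be hinted at with a toy. The sketch below is Python, so it can only check contracts at runtime; SPARK discharges them statically, without running the program. The names `LIMIT`, `saturating_add`, and `holds_for_all_inputs` are hypothetical, and the brute-force loop is only feasible because the input domain is tiny.

```python
from itertools import product

LIMIT = 255  # hypothetical upper bound for a small bounded quantity


def saturating_add(a: int, b: int) -> int:
    """Add two bounded values, clamping the result at LIMIT."""
    # Precondition: both operands are inside the declared range.
    assert 0 <= a <= LIMIT and 0 <= b <= LIMIT, "precondition violated"
    result = min(a + b, LIMIT)
    # Postcondition: result stays in range and is never below either operand.
    assert 0 <= result <= LIMIT and result >= max(a, b), "postcondition violated"
    return result


def holds_for_all_inputs() -> bool:
    """Exercise every legal input pair; any contract failure raises."""
    for a, b in product(range(LIMIT + 1), repeat=2):
        saturating_add(a, b)
    return True
```

Enumerating 65,536 pairs is a stand-in for proof, not a substitute. Once the inputs are 32-bit or 64-bit values the loop stops being an option, which is exactly where a prover earns its keep.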

Even the names tell the story. The Ravenscar Profile, proposed at the 1997 International Real-Time Ada Workshop in Ravenscar, narrows tasking so concurrency becomes predictable enough for certification, formal verification, and hard real-time use. This is how serious engineering talks when it has been burned enough times. Not more freedom. Fewer escape hatches.

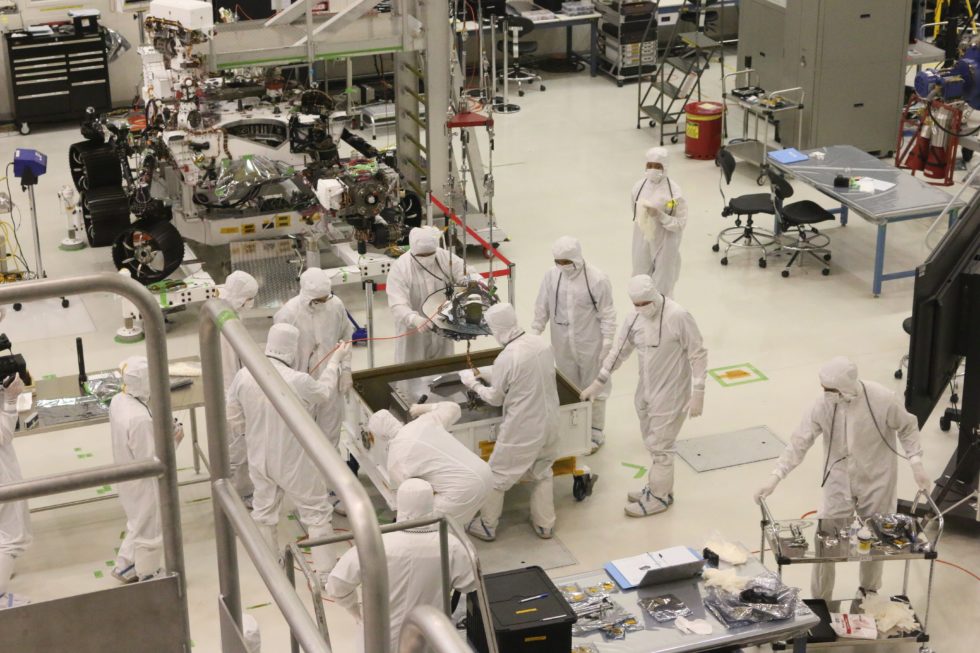

Rocket Software Did Not Start With Startup Energy

Long before people were romanticizing fast iteration, NASA's Space Shuttle program adopted HAL/S, a higher-order language built for flight software. The 1974 NASA material is almost endearing in how direct it is: HAL/S was developed for Shuttle flight software and meant to satisfy virtually all of its requirements. Reliability was not a side quest. It was the reason the language existed.

And HAL/S was not just dressed-up assembly. NASA highlighted language clarity, readability, modularity, protection of code and data, automatic checking under strict compiler rules, even linear algebra and a statement-level simulator. That is an unusual little detail worth noticing: when the mission is guidance and control, math stops being library flavor and becomes part of the language design.

Later aerospace and defense work leaned heavily on Ada and, for higher assurance, on subsets and proof-friendly profiles. The pattern stayed constant even as the tooling changed: narrow the behavior, expose the contracts, remove ambiguity where the machine can punish you for it.

The Language Alone Will Not Save You

There is another lesson buried in the wreckage. A better language is not holy water. Ariane 501 still died in 1996 because of software specification and design errors in the inertial reference system. ESA's inquiry board said the software analysis and testing were not adequate to reveal the fault. The launcher did not care that the engineers were working in a serious domain. It cared that a bad assumption survived into flight.
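The inquiry report traced the failure to a 64-bit floating-point value being converted to a 16-bit signed integer that could not hold it. The sketch below is not the flight code and does not model the inertial reference system; `to_int16_checked` and `to_int16_saturating` are hypothetical helpers that only show two ways a narrowing conversion can be made explicit.

```python
INT16_MIN, INT16_MAX = -32768, 32767


def to_int16_checked(value: float) -> int:
    """Truncate toward zero, refusing anything outside the 16-bit range."""
    n = int(value)
    if not INT16_MIN <= n <= INT16_MAX:
        raise OverflowError(f"{value!r} does not fit in a signed 16-bit integer")
    return n


def to_int16_saturating(value: float) -> int:
    """Truncate toward zero, clamping out-of-range values to the nearest limit."""
    return max(INT16_MIN, min(INT16_MAX, int(value)))
```

Neither helper is the safe one in the abstract. Raising an error is only useful if something sensible handles it, and clamping is only useful if a clamped value is physically meaningful. Which one belongs in a given system is a specification decision, and that is the part no language makes for you.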

This matters because safety culture loves symbols. The approved language. The compliant subset. The framed process chart. None of those are magic. They help by shrinking the surface area for stupidity. They do not repeal stupidity. You still need system thinking, hardware interlocks, realistic testing, and the humility to assume your software can lie.

Some Teams Went Even Further And Stopped Hand-Writing So Much Of The Risk

Another branch of this family tree decided that even disciplined handwritten code was too slippery for some domains. Tools like Ansys SCADE push teams toward a formally defined modeling language, verification against requirements, and qualified code generation for standards like DO-178C, IEC 61508, EN 50128, and ISO 26262. In plain language: stop trusting informal translation steps any more than you have to.

That is the same old instinct again. If the path from intent to machine behavior is where people keep smuggling ambiguity, then tighten the path. If manual coding keeps giving error too much room to hide, move more of the argument into something analyzable, checkable, and certifiable.

What These Traditions Actually Understood

All of these traditions, HAL/S, MISRA, Ada, SPARK, Ravenscar, SCADE, were built around one ugly truth: in high-consequence systems, convenience is not an innocent preference. Convenience is a risk budget. Every expressive shortcut, every unclear side effect, every undefined corner, every hand-waved race condition is a bet placed with somebody else's skin in the pot.

That is why these ecosystems keep sounding harsher than mainstream developer culture. They are harsher. They were shaped by rockets, rail, defense, cars, and medical systems where a bug does not become a customer support ticket. It becomes an investigation.

The modern software world keeps asking how fast we can ship. The reliability world asks a better question first: what kind of room is this? If the room contains propulsion, radiation, braking, dosage, or guidance, then the language should act less like a canvas and more like a border guard.

Now The Slow, Meticulous Part Does Not Have To Be Human-Powered

This is the part that gets interesting now. Historically, the price of high assurance was a lot of careful human labor. More process. More repetition. More double work. More people spending long, serious hours making sure the code did not freeload its way past reality. That cost was real, and it is one reason so much of mainstream software accepted the bargain instead.

What we are trying to do with LLL is inherit the hard-won instincts of those traditions without forcing teams to crawl back into the old productivity ceiling. The language can carry more of the suspicion. The toolchain can carry more of the meticulous enforcement. AI can do more of the slower, dutiful work that humans used to do by hand when the consequences were too serious to improvise.

So yes, it may be a little slower than the pure startup fantasy where everything ships on vibes and an apology backlog. But the point is that you no longer have to choose so crudely. You can move fast without worshipping corner-cutting. You can get much closer to the speed startups want and much closer to the discipline high-integrity systems require. That is the real promise here: less bargain, more leverage, and a language willing to do the paranoid part of the job.